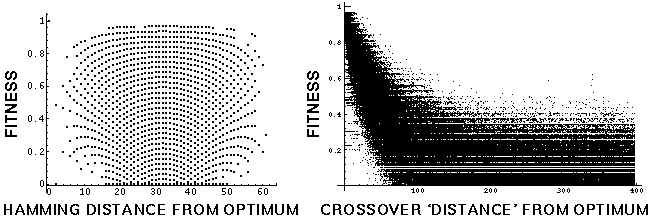

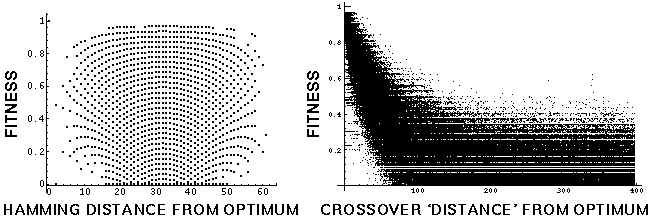

Fitness distance correlation (FDC) has been proposed as a summary statistic for predicting the performance of genetic algorithms (GAs) in global optimization, with apparent success. Here, a counterexample to Hamming-distance-based FDC is examined for what it reveals about how GAs work. The counterexample is a fitness function that is 'GA-easy' for global optimization but shows no relationship between fitness and Hamming distance from the global optimum. Fitness declines with the number of switches between 0 and 1 along the bitstring. The test function is 'GA-easy' in that a GA using only single-point crossover can find the global optimum after sampling a fraction on the order of 10⁻³ to 10⁻⁹ of the points in the search space, and this efficiency increases with the size of the search space. This result confirms the suspicion that predictors of genetic algorithm performance are vulnerable if they are based on arbitrary properties of the search space rather than on the actual dynamics of the genetic algorithm. The test function's solvability by a GA is, however, accurately predicted by another property: its evolvability, the probability that the genetic operator produces offspring fitter than their parents. It is also accurately predicted by an FDC that uses a distance measure defined by the crossover operator itself instead of Hamming distance. Mutation-based distance measures are also investigated and are found to predict the GA's performance when mutation is the only genetic operator acting. Finally, Hamming-distance-based FDC analysis, crossover-distance-based FDC analysis, evolvability analysis, and other methods of predicting GA performance are compared.
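To make the counterexample concrete, here is a minimal sketch that computes Hamming-distance FDC by exhaustive enumeration for a small string length. The abstract does not give the exact formula, so the switch-counting function below is a hypothetical stand-in. Its fitness is `L` minus the number of 0/1 switches, and switches are counted with an assumed fixed 0 before the first bit so that the all-zeros string is the unique optimum. One-max (fitness equals the number of zeros) is included as a contrast case with the same optimum. The helper names (`switches`, `switch_fitness`, `onemax_fitness`, `pearson`, `hamming_fdc`) are illustrative only.

```python
import math
from itertools import product


def switches(bits):
    """Count 0/1 switches along the string.

    Assumption (hypothetical boundary convention): a fixed 0 precedes the
    first bit, so the all-zeros string is the only one with zero switches.
    """
    count = 0
    prev = 0
    for b in bits:
        if b != prev:
            count += 1
        prev = b
    return count


def switch_fitness(bits):
    # Fitness declines with the number of switches; maximum len(bits) at 00...0.
    return len(bits) - switches(bits)


def onemax_fitness(bits):
    # Contrast case: fitness is the number of zeros, same optimum 00...0.
    return len(bits) - sum(bits)


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mx = sum(xs) / n
    my = sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)


def hamming_fdc(fitness, length):
    """Correlation between fitness and Hamming distance to the all-zeros
    optimum, taken over all 2**length bitstrings."""
    fits, dists = [], []
    for bits in product((0, 1), repeat=length):
        fits.append(fitness(bits))
        dists.append(sum(bits))  # Hamming distance to 00...0
    return pearson(fits, dists)


if __name__ == "__main__":
    L = 12
    print(f"switch function FDC: {hamming_fdc(switch_fitness, L):+.4f}")
    print(f"one-max FDC:         {hamming_fdc(onemax_fitness, L):+.4f}")
```

With `L = 12`, this prints an FDC of -0.0833 for the switch function and -1.0000 for one-max. For this particular variant, the exhaustive correlation works out to exactly -1/L, so it approaches zero as strings get longer. Yet the abstract reports that the switch function is GA-easy under single-point crossover.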